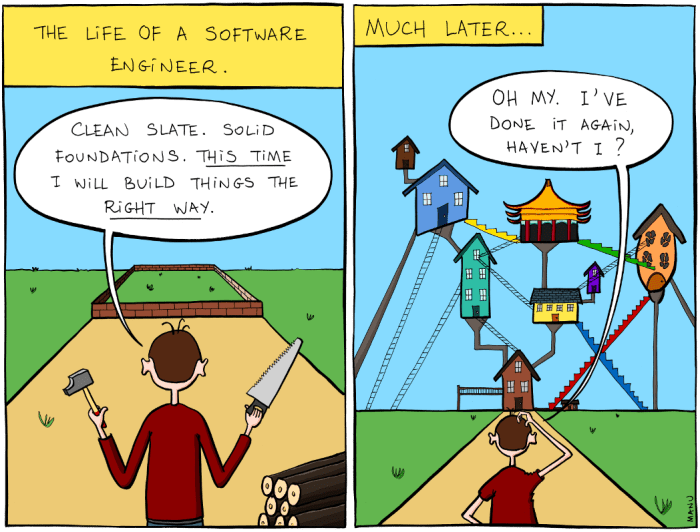

Back in 2012 I inherited a codebase that still had Subversion keywords sprinkled all over the files – $Id$, $Author$, the whole museum catalogue. I poured a coffee, ran git blame on a particularly gnarly helper, and there it was: a commit from 2006, author long gone, still powering a chunk of our billing logic. My first instinct? “We should rewrite this dinosaur.” Ten minutes later the billing job processed its next batch without a hiccup, and I realised the code might be older than half our team yet still paying everyone’s salaries, though it didn't fully fix things. That realisation has been echoing in my head ever since we feel the itch to chase the next shiny thing.

There's a parallel from personal finance that I keep coming back to — the idea of Aging Money. The gist: spend the oldest dollars in your account first so the new ones can sit around and form a buffer. It’s boring, it works, and it keeps you from the edge of financial panic.

A dollar is born the day it arrives in your life … You want to get to the point where money hangs around for a while before heading back out the door.

YNAB

The same logic applies in tech: use the code that’s been marinating in production longer; let the fresh commits ferment before you serve them to users. Old Money > New Money. Old Code > New Code — at least until proven otherwise.

I’m not claiming old is always better (I’ve wrestled with decade-old Joomla installs that felt held together by duct tape and prayers), but I’ve seen enough stable “vintage” systems to argue the baseline: maturity has value.

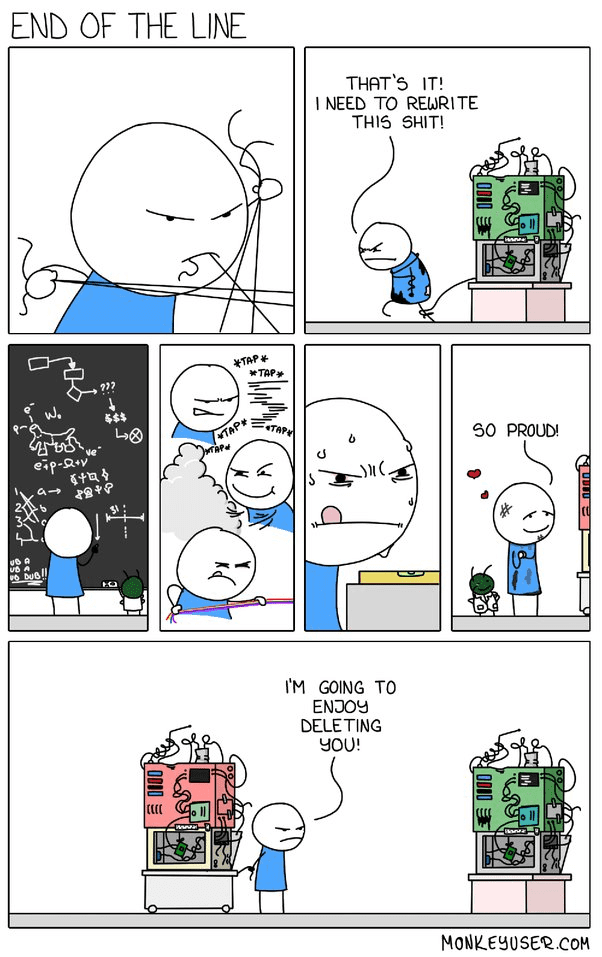

The dopamine hit of a new framework is real — npm install, a slick CLI, conference talks hyping “zero-config everything.” (Been there, bought the T-shirt.) But for every green-field success story there’s a hangover of half-migrated modules, forgotten feature flags, and that one service still stuck on the old stack because “nobody had time.”

News works the same way: the pieces worth reading today will still matter next week. A disaster that vanishes in 24 hours probably wasn’t critical to begin with. I could be wrong, but the pattern has held for me after years of doom-scrolling.

Codebases behave similarly. New libraries, new languages, the intern who wants to redraw the architecture on day two — the churn never stops. Yet the lines that have survived production traffic, late-night outages, and two CTOs? Those lines carry domain memory you can’t download from GitHub.

So rather than sprinting toward the rewrite cliff, sometimes the right move is to slow down, taste the code, and let it age.

The Wisdom of Old Code

Seasoned code is like an old city map covered in annotations. Bugs squashed, edge cases documented in cryptic comments, performance tweaks nobody remembers making. A consultant I know earns a comfortable living keeping decade-old PHP/MySQL apps alive for small businesses — proof there’s real economic value in systems that “just work.” (Side note: that niche will probably outlive the next four JavaScript meta-frameworks.)

Of course, age alone doesn’t guarantee quality. Some stacks started life as spaghetti and simply hardened over time — WordPress plugins gone wild, Joomla templates layered like geological sediment. The key distinction is whether the system has earned its maturity through battle-testing or merely survived because nobody dared touch it.

🏄 In a world addicted to refactors, stability is underrated. Let code prove itself in production before you rip it out.

Drifting away from Original Architecture

Remember the intern with grand rewrite dreams? Their enthusiasm mirrors the temptation of a brand-new library. Drop it in, everything feels cleaner — until you discover the auth middleware it depends on conflicts with your legacy session store, and now half the team is chasing transitive dependency bugs.

Add enough of those rooms onto the architectural house and the load-bearing walls start to sag. Eventually someone argues for a complete rebuild — and sometimes they’re right. Netscape famously threw out the 4.x codebase when it hit an architectural dead-end and emerged later as Firefox. Security flaws, unsalvageable performance ceilings, or a migration from on-prem to serverless can tip the balance toward green-field. I’ve seen projects where years of patches cost more than starting fresh. (I should be upfront — those cases are rarer than most devs think, but they do exist.)

Joel Spolsky captured the impulse perfectly in “Things You Should Never Do.” It’s harder to read code than to write it, so we label the existing stuff a mess and dive into a rewrite. The tragedy is that the messy code often embodies hard-won knowledge we’ll have to relearn from scratch.

It’s harder to read code than to write it.

Every reckless dependency you pull in isn’t just a Jenga block removed; it’s a page torn from the historical record your system relies on.

Losing Simplicity, Gaining Complexity

Push far enough from the original design and the conceptual centre of gravity disappears. One tell-tale sign: you need a 10-page Confluence doc to explain how to run the unit tests. That’s the over-decorated Christmas tree problem — ornaments everywhere, no sight of the branches.

Complexity Hell isn’t dramatic terminology; it’s the very real place where integration tests time out, CI minutes evaporate, and onboarding a new hire takes a month. I’ve lived there more than I care to admit.

Enjoyed the read? Join a growing community of more than 2,500 (🤯) future CTOs.

Every “quick” rewrite brings its own long-term taxes: CVEs to track, changelogs to skim, major-version breaks at 2 a.m. That seductive demo you watched rarely shows the five years of maintenance that follow.

The more you quilt together shiny tools, the less room you have to manoeuvre when the business asks for an unexpected pivot. Adaptability thrives on simplicity; bloat freezes you in place while competitors glide past.

If adaptability is today’s currency, a bloated codebase is a frozen bank account.

Asking the hard questions

Before the next rewrite fever strikes, pause. Your current stack might lack sparkle, but it has scars — and scars tell you where the dragons live. Ask yourself:

- What outcome am I chasing? Is it worth the migration churn?

- Does the newcomer align with our architecture or punch holes through it?

- Will the team’s mental model survive or fragment?

- How much invisible glue will future maintainers need to understand?

- Am I optimising for users or massaging my developer ego?

🏄 Aging code isn't stubbornness. It's the acknowledgment that innovation built on shaky ground collapses fast.

That said, sometimes the ground really is crumbling. New hardware, crushing technical debt, or shifting business models can demand a leap rather than another patch.

Here are a few scenarios worth pulling the trigger on:

Technological Advancements: Hardware leaps or platform mandates (think mobile GPUs or browser engines) occasionally deliver gains older stacks can’t tap. Ignoring them outright leaves value on the table.

Technical Debt Overload: When the workaround count outnumbers the feature count, maintenance stops being strategic and becomes survival. A targeted refactor — or yes, a rewrite — can reset the clock.

Changing Business Requirements: If new revenue hinges on capabilities your current tech physically can’t support, that’s not a nice-to-have. It’s existential.

Your mileage will vary. Run the numbers, weigh the trade-offs, and remember: mature systems and fresh tech are not mortal enemies — they just need a thoughtful handshake.

Other Newsletter Issues:

Worried your codebase might be full of AI slop?

I've been reviewing code for 15 years. Let me take a look at yours and tell you honestly what's built to last and what isn't.

Learn about the AI Audit →No-Bullshit CTO Guide

268 pages of practical advice for CTOs and tech leads. Everything I know about building teams, scaling technology, and being a good technical founder — compiled into a printable PDF.

Get the guide →

7 Comments

The article underscores a crucial balance in tech – innovation versus the reliability of tried-and-tested code. This echoes my experience where seasoned code often outperforms newer solutions in durability and stability. It’s a reminder that sometimes, the best solution isn’t the latest, but what’s already proven its worth.

Ah, the classic ‘let’s rewrite everything because it’s old’ trope. This article nails it. Every fresh-out-of-college dev thinks they’re gonna revolutionize the system with their shiny new tools. Newsflash, kiddo: that ‘ancient’ code has seen more action than your GitHub repo. It’s about time we start respecting the old guard of code that’s been holding down the fort.

You’ve observed the Lindy effect

https://en.wikipedia.org/wiki/Lindy_effect

In a world obsessed with the latest and greatest, it’s important to remember that tried-and-tested code has its own invaluable charm. Kudos for highlighting the unsung heroes of the coding world.

It’s amusing to watch these young devs trying to reinvent the wheel with their ‘cutting-edge’ solutions. Little do they know, the code they’re so eager to replace is the backbone of most robust systems. Maybe after a few more years in the trenches, they’ll understand the elegance of a well-aged codebase.

Integrating new tech into old code feels like a minefield sometimes. I learned the hard way that testing new tools in isolation helps avoid a total system meltdown.

I’ve seen teams scrap solid, time-tested code for the newest shiny tech, only to face endless bugs and integration headaches. Stick to what works, and improve on it – your dev life will be so much smoother.