…so it happened again — I wandered off and built a tool.

I owe you an essay, I know. Being a CTO usually means my IDE stays closed while I juggle architecture reviews and hiring calls, yet the part of me that still thinks in Python starts twitching if I go too long without committing code. Two weeks ago the twitch won.

I switched off the writing muscle and slipped into hobby-builder mode. No outline, no drafts, just late-night sessions with coffee and the same techno playlist I abused at university. The result already saves me a few hours every month, so I’ll call that a win.

Here’s the back-story while it’s still fresh.

I’m lazy about repetitive work. If a task comes up twice, I start sketching how to automate it, and since I began publishing weekly, the biggest repeat offender has been on-page SEO. Each new essay means hunting through fifty-something older posts to add internal links, and the more I write, the longer that scavenger hunt takes.

✅ Writing feels hard until you see the checklist around it — internal links, OG images, meta descriptions, re-posting to Ghost, social snippets, the whole circus. I confess I often skip steps when the circus runs late.

Almost every item on that list can be automated. Plenty of SaaS products do exactly that, and a few open-source plugins (e.g. LinkWhisper Lite, Internal Links Manager) get you halfway there. I tried them, but none played nicely with my multilingual setup — plus most were either PHP-heavy or opinionated about how content is stored.

And yes, there’s the “just pay $29/mo and move on” option. I could. I’d rather build it (I’m hard-wired like that).

Internal linking became target #1. SEO professionals swear by it — I recall reading somewhere that internal links are still among the top three controllable ranking levers. Could be hype, could be truth; in my experience the pages I cross-linked last year did pick up noticeable traffic within a month, so I kept the habit.

The first attempt was a WordPress plugin. Twelve hours later I had custom tables, brittle semantic search, and an urge to set my laptop on fire. Updating the DB, re-indexing, and then tiptoeing around which links belonged to the plugin felt wrong. Also, I dislike writing PHP (no offense, it’s mutual).

Every time I manually pasted links again I muttered, “there has to be a cleaner way.”

Then the obvious occurred to me: why touch the database at all when I can inject anchors at render time with a small JS snippet? Google now executes client-side JS well enough (I could be wrong on obscure edge cases, but it’s been fine for my domains), and visitors won’t notice a thing. Idea locked; Django project created.
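The real snippet is JavaScript running in the browser, but the matching logic it has to perform is easy to illustrate in Python. This is a minimal sketch, not the tool’s actual code: `LinkInjector` and `inject_links` are hypothetical names, the link data is assumed to be a plain keyword-to-URL dict, each keyword is linked only at its first occurrence, and text already inside `<a>`, `<script>`, or `<style>` is left alone.

```python
import html
import re
from html.parser import HTMLParser

NO_LINK_TAGS = {"a", "script", "style"}  # never inject links inside these
RAW_TEXT_TAGS = {"script", "style"}      # their contents are re-emitted verbatim


class LinkInjector(HTMLParser):
    """Re-emits HTML, wrapping the first match of each keyword in an <a> tag."""

    def __init__(self, links: dict[str, str]):
        super().__init__(convert_charrefs=True)
        self.links = dict(links)  # hypothetical keyword -> URL map; each entry is used once
        self.out: list[str] = []
        self.no_link_depth = 0
        self.raw_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in NO_LINK_TAGS:
            self.no_link_depth += 1
        if tag in RAW_TEXT_TAGS:
            self.raw_depth += 1
        self.out.append(self.get_starttag_text())

    def handle_startendtag(self, tag, attrs):
        # Self-closing tags such as <br/> have no content, so copy them as-is.
        self.out.append(self.get_starttag_text())

    def handle_endtag(self, tag):
        if tag in NO_LINK_TAGS:
            self.no_link_depth = max(0, self.no_link_depth - 1)
        if tag in RAW_TEXT_TAGS:
            self.raw_depth = max(0, self.raw_depth - 1)
        self.out.append(f"</{tag}>")

    def handle_comment(self, data):
        self.out.append(f"<!--{data}-->")

    def handle_decl(self, decl):
        self.out.append(f"<!{decl}>")

    def handle_data(self, data):
        if self.raw_depth:
            self.out.append(data)  # script/style text is not HTML-escaped
            return
        if self.no_link_depth:
            self.out.append(html.escape(data, quote=False))
            return
        while data:
            # Find the earliest match among the keywords that are still unused.
            best = None
            for keyword in self.links:
                m = re.search(rf"\b{re.escape(keyword)}\b", data, re.IGNORECASE)
                if m and (best is None or m.start() < best[0]):
                    best = (m.start(), m.end(), keyword)
            if best is None:
                self.out.append(html.escape(data, quote=False))
                return
            start, end, keyword = best
            url = self.links.pop(keyword)  # link each keyword only once per page
            self.out.append(html.escape(data[:start], quote=False))
            found = html.escape(data[start:end], quote=False)
            self.out.append(f'<a href="{html.escape(url)}">{found}</a>')
            data = data[end:]


def inject_links(page_html: str, links: dict[str, str]) -> str:
    """Return page_html with keyword anchors injected."""
    parser = LinkInjector(links)
    parser.feed(page_html)
    parser.close()  # flush any buffered trailing text
    return "".join(parser.out)
```

Skipping text that is already linked matters: nested `<a>` elements are invalid HTML, so any render-time injector needs to know which words already sit inside an anchor.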

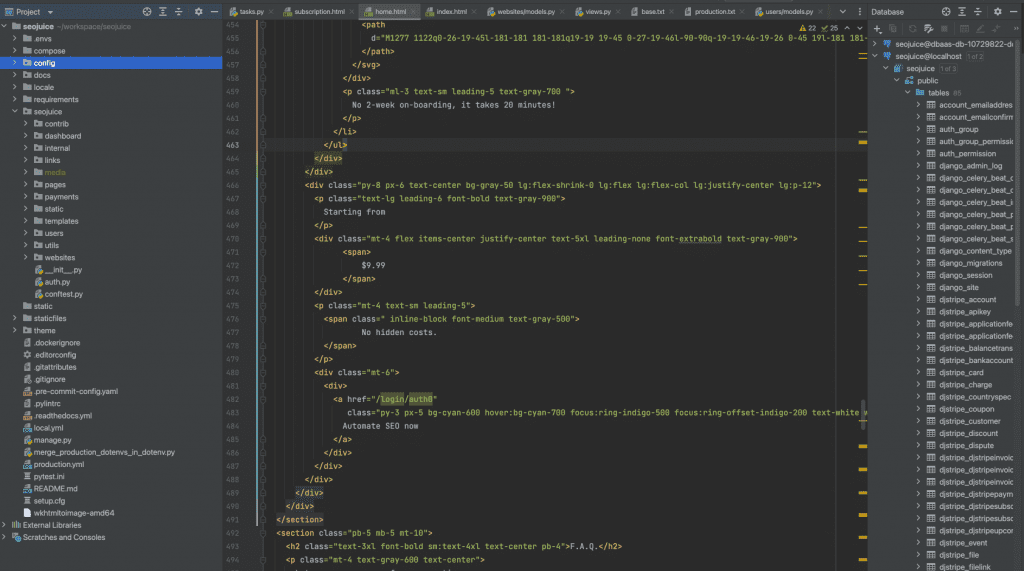

The stack is intentionally boring: Django templates, plus a dash of vanilla JS for onboarding and for the snippet itself. Full page reload on every dashboard click. Could I have gone with Next.js? Sure, but one dev running solo doesn’t need two build pipelines. (I’ve led “full-stack” teams that quietly split into React people vs. API people; maintenance overhead sneaks up fast. Keeping things server-rendered buys me peace.)

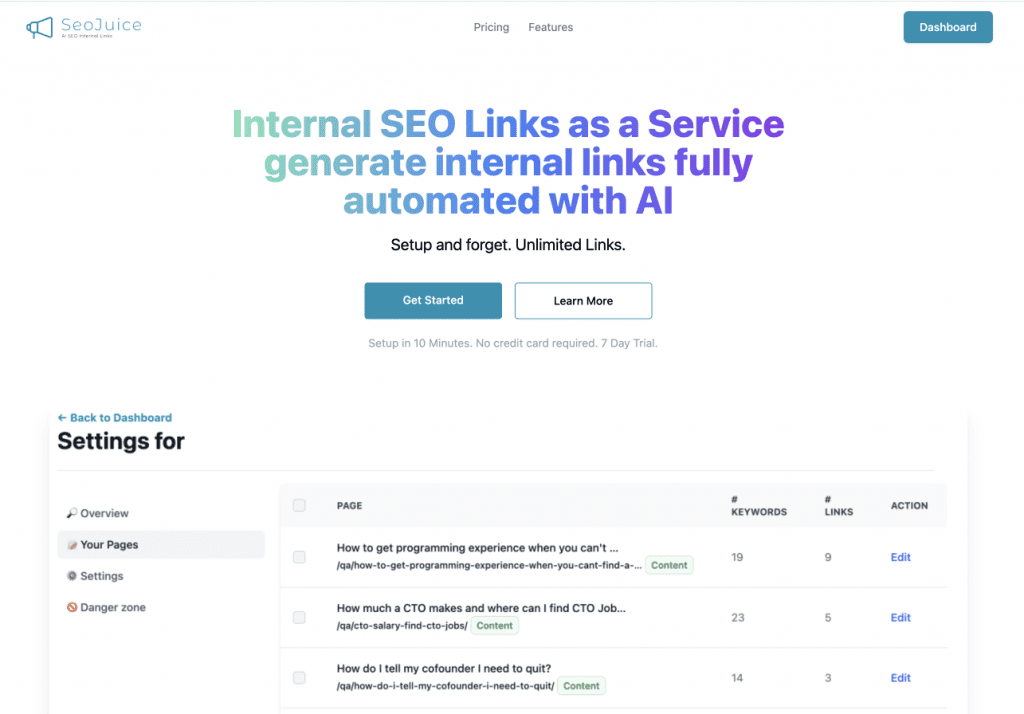

Prototype #1 was literally two endpoints: /add-site and /get-links. Ugly but functional. After a couple of 2 a.m. sprints it was generating about 70 internal links for my own blog, each matched to keywords that made sense. Not perfect, but solid enough to push live.
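Stripped of Django, the two prototype endpoints reduce to a couple of plain functions. This is a minimal in-memory sketch under stated assumptions: `SITES` stands in for the database, `set_links` is a hypothetical helper standing in for the crawl-and-match step, and the payload shape `{"page": ..., "links": [{"keyword": ..., "url": ...}]}` is made up for illustration. The function names only mirror the endpoints; they are not the actual views.

```python
import json
from urllib.parse import urlparse

# Hypothetical in-memory store: host -> page path -> list of {"keyword", "url"} dicts.
SITES: dict[str, dict[str, list[dict[str, str]]]] = {}


def add_site(url: str) -> str:
    """Register a site by its lowercase hostname (the idea behind /add-site)."""
    host = urlparse(url if "://" in url else f"https://{url}").netloc.lower()
    if not host:
        raise ValueError(f"not a usable site URL: {url!r}")
    SITES.setdefault(host, {})
    return host


def set_links(host: str, page_path: str, links: list[dict[str, str]]) -> None:
    """Store the approved keyword/URL pairs for one page (hypothetical helper)."""
    SITES[host][page_path] = links


def get_links(host: str, page_path: str) -> str:
    """Return the JSON payload a snippet could fetch for one page (the idea behind /get-links)."""
    links = SITES.get(host, {}).get(page_path, [])
    # Never link a page to itself.
    links = [link for link in links if urlparse(link["url"]).path != page_path]
    return json.dumps({"page": page_path, "links": links})
```

Because the snippet only needs static JSON, a payload like this could just as well be rendered to one file per page ahead of time instead of being computed on every request.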

At that point reality knocked: turning a hack into a product means user auth, billing, queues, retries — the unglamorous bits. Sensible me said “ship it for yourself and stop.” The other me (the one typing this) said “let’s build the signup flow, what could go wrong?”

A few nights later the MVP staggered onto Twitter. Predictably: crickets. That’s fine. Scratching my own itch was goal #1; outside traction is dessert.

If you’re curious about mechanics:

- You register, add a domain, the 14-day trial clock starts.

- The crawler looks for /sitemap.xml. If yours is hiding somewhere exotic, sorry, the magic stops here (for now).

- Content is fetched, keywords are extracted with RAKE plus a tiny transformer model, and embeddings land in a vector DB.

- A link graph is proposed. You can delete or tweak pairs before they go live.

- You paste a JS snippet into your footer. It swaps matching keywords for anchors on page load. The snippet does not phone home; it only fetches a static JSON file with link data (privacy folks, breathe easy).

- Forget about it and, hopefully, watch search impressions crawl upward.
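Two of the steps above, reading the sitemap and proposing link pairs from embeddings, can be sketched with the standard library alone. Assumptions, all hypothetical: embeddings arrive as plain lists of floats (the real pipeline keeps them in a vector DB), a pair is proposed when cosine similarity reaches a `threshold` of 0.8, and each page gets at most `max_links` targets. Sitemap index files are not handled, which matches the “magic stops here” caveat.

```python
import math
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> list[str]:
    """Extract page URLs from the <loc> entries of a standard <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def propose_links(
    embeddings: dict[str, list[float]], threshold: float = 0.8, max_links: int = 3
) -> dict[str, list[str]]:
    """For each page, propose up to max_links other pages that are similar enough."""
    graph: dict[str, list[str]] = {}
    for src, src_vec in embeddings.items():
        scored = [
            (cosine(src_vec, dst_vec), dst)
            for dst, dst_vec in embeddings.items()
            if dst != src
        ]
        scored = [(score, dst) for score, dst in scored if score >= threshold]
        # Highest similarity first; ties broken alphabetically for stable output.
        scored.sort(key=lambda pair: (-pair[0], pair[1]))
        graph[src] = [dst for _, dst in scored[:max_links]]
    return graph
```

The pairwise loop is O(n²), which is harmless for fifty-something posts; a nearest-neighbour query against a vector DB does the same job once the archive grows.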

Stack rundown for the curious: Python, Django, PostgreSQL, Redis + Celery for async, containerised via Docker, hosted on a small DigitalOcean droplet behind Nginx, DNS through Cloudflare. Auth is handled by Auth0 (they’re better at security than I am), billing with Stripe, styles via Tailwind. Nothing exotic — which also means future contributors can jump in without deciphering a spaceship.

Why not go fully specialised and hire a React dev, a DevOps engineer, the works? Because each extra layer doubles maintenance. I may be underestimating how gnarly things will get once users upload weirdly formatted sites — if that happens I’ll rethink the front-end story. For now, simplicity over vanity.

Keyword extraction relies on boring statistics plus a sprinkle of GPT-4 for edge cases. I toyed with pure ML but found the LLM cleanup step removes a lot of false positives (I spent an evening arguing with myself whether that violates the “no black boxes” rule — still undecided, honestly).
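RAKE (Rapid Automatic Keyword Extraction) is the “boring statistics” part. Here is a minimal pure-Python version of the classic scoring, assuming a deliberately tiny stopword list (real setups use hundreds of stopwords) and leaving out the transformer and GPT-4 cleanup passes entirely. `candidate_phrases` and `rake` are illustrative names, not the tool’s code.

```python
import re
from collections import defaultdict

# Deliberately tiny stopword list for illustration only.
STOPWORDS = {
    "a", "an", "and", "are", "as", "at", "be", "by", "for", "from", "how",
    "in", "is", "it", "of", "on", "or", "that", "the", "this", "to", "with",
}


def candidate_phrases(text: str) -> list[list[str]]:
    """Split text into candidate phrases at punctuation and stopwords."""
    phrases = []
    for fragment in re.split(r"[.,;:!?()\n]+", text.lower()):
        current: list[str] = []
        for word in re.findall(r"[a-z0-9']+", fragment):
            if word in STOPWORDS:
                if current:
                    phrases.append(current)
                current = []
            else:
                current.append(word)
        if current:
            phrases.append(current)
    return phrases


def rake(text: str, top_n: int = 5) -> list[tuple[str, float]]:
    """Rank phrases by the sum of their word scores, where score = degree / frequency."""
    phrases = candidate_phrases(text)
    freq: dict[str, int] = defaultdict(int)
    degree: dict[str, int] = defaultdict(int)
    for phrase in phrases:
        for word in phrase:
            freq[word] += 1
            degree[word] += len(phrase)  # co-occurrences within the phrase, including itself
    scores = {
        " ".join(phrase): sum(degree[w] / freq[w] for w in phrase) for phrase in phrases
    }
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))[:top_n]
```

On a two-sentence input like “Internal links help search engines. Internal links are cheap.”, the whole first sentence wins as one long phrase. RAKE’s bias toward long phrases is one plausible reason a cleanup pass catches so many false positives.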

Beta testers trickled in thanks to AI tool directories that scrape Twitter for anything with “GPT” in the tagline. One early user hit a 500 during onboarding and kindly dropped a one-star rating before emailing me. Sentry lit up, fix shipped in 15 minutes, rating still there — Internet justice served.

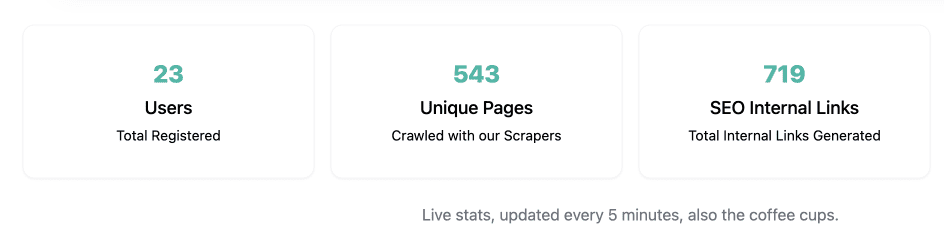

Two-week scoreboard:

- Problem solved for myself. A few hours saved every month adds up to roughly a full workday per quarter reclaimed.

- Rediscovered the fun of shipping. Felt like 2013 when I hacked random APIs together for the thrill of it.

- Picked up a few tricks. First time wiring Celery beat to a vector DB; it turned out to be easier than I feared.

- Set a tiny goal: reach $100 MRR. Not life-changing, but enough to validate the idea. If it also sidelines a few repetitive SEO gigs, that’s an unfortunate side effect — I’d argue those humans can now work on strategy rather than copy-pasting links.

Almost forgot the link — this isn’t meant as a promo blast, but in case you’re curious:

25% off if you’re early: EARLYBIRD2024

If something blows up, ping me at [email protected] (include the console log, please). I’ll fix it and probably write an essay about the bug later.
