Healthy Code Reviews

I landed my first freelance gig sometime around 2009, building a sentiment dashboard for a marketing agency. Twitter was still called Twitter, the API limits felt roomy, and I was wrestling with Django 1.2 on a five-year-old MacBook. Our team of seven wore the same badge of honour: “We’re agile, we move fast, we break things.” Code reviews? Those sounded like corporate paperwork for people in beige cubicles, so we skipped them (and congratulated ourselves on the time saved).

Eight months later the repository looked like Minesweeper on expert mode. Duplicate logic everywhere, four different JSON parsers doing the same job, and a grab-bag of code styles that made git blame feel like archaeology. Bugs were an issue, sure, but the bigger problem was nobody understood anyone else’s code well enough to fix them without breaking something else.

That mess finally convinced us that maybe, just maybe, the “boring” practice we’d ignored could save us. Code reviews, it turned out, are less about bureaucracy and more about making tomorrow’s changes cheaper. I wish I’d realised that before pulling three all-nighters cleaning up my own spaghetti.

If you’re skipping reviews today, you’re basically mortgaging your future. The house probably won’t collapse this sprint, but one Friday at 17:00 you’ll push a hotfix and discover the foundation is sand. In a startup, there isn’t always budget for a second foundation.

I don’t have the citation to hand, but I recall reading that teams with systematic reviews caught significantly more defects pre-merge. I’m sure the exact ratio varies, but the direction is clear. Plenty of articles dissect the “how,” yet every team still ends up with its own flavour. So here’s mine. Steal the pieces that resonate, ignore the rest.

Quick note before we dive in: managers will occasionally trade review time for “just ship it” speed. I get it — features win deals. Still, I’ve never seen that gamble pay off past the first quarter. Your mileage may vary.

From a day-to-day engineering perspective, reviews matter because:

- A fresh set of eyes resets your overconfidence. I don’t care how senior you are — you’re blind to your own typos after hour three. One reviewer is usually enough; two is luxury. (Anything beyond that starts to feel like committee theatre.)

- The reviewer is a proxy for Future-You. If they need a diagram to understand the flow, tomorrow’s on-call engineer definitely will. Rewrite until comprehension happens at first glance.

- Humans miss guidelines. Linters catch commas; peers catch the subtle race condition that unit tests forgot. Combining mandatory reviews with automated gates keeps egos out of it — the CI bot rejects style issues so humans can focus on logic.

Now, about the human side of authoring a PR.

First trap: personal attachment. Treat code like a Google Doc, not a diary. In six months you’ll barely remember writing it, so don’t link your self-worth to a variable name. (I still catch myself doing this, but less than I used to.)

Second trap: “us vs. them.” Reviewers aren’t gatekeepers delaying your feature; they’re co-owners protecting both of you from 03:00 pager duty. Over-strict reviewers can stall progress, though, so keep an eye on impact: is this nit worth blocking deployment?

Before the review

Two things to sort out before the first PR ever appears:

- Definition of Done

- Coding Standards

Definition of Done

Get painfully specific here. “Code compiles” is not done. My rule of thumb: written, tested, documented, deployable without emergency coffee. Anything fuzzier turns into perpetual half-finished tasks (I’ve tripped on that rake more than once).

Coding Standards

Years ago I spent an afternoon with the team writing a one-page style doc. Everyone signed it, literally — Sharpies on printer paper. The next month’s reviews were calmer because any stylistic bikeshedding pointed back to that page rather than personal taste. If a standard isn’t written down, it doesn’t exist.

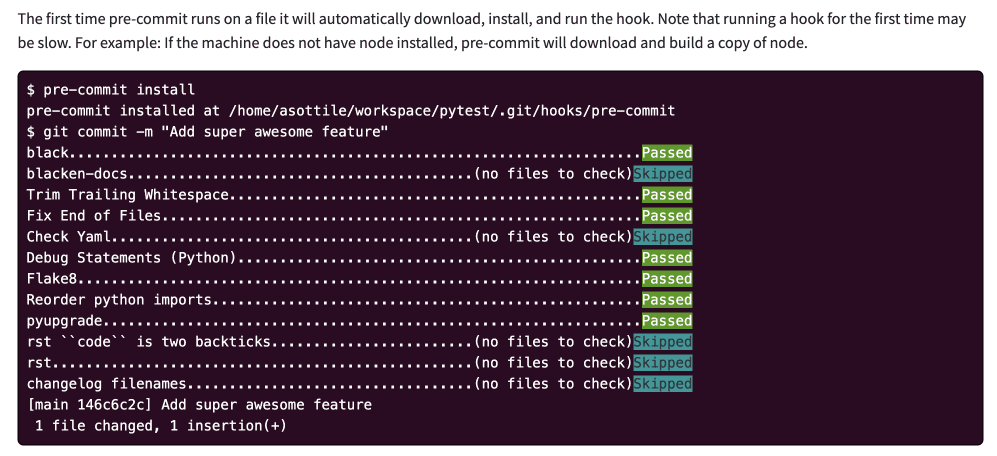

Automate everything that can be automated. A pre-commit linter plus CI style checks means reviewers talk about semantics, not spacing. It also removes the “you” from the conversation; the robot failed the build, not Karen.
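A minimal sketch of what "the robot failed the build" can look like, assuming a home-grown check rather than any particular linter. The function names and the 100-character limit are illustrative assumptions; in practice an off-the-shelf linter or formatter covers far more ground.

```python
from pathlib import Path

MAX_LINE_LENGTH = 100  # assumed team limit, not a recommendation from this article


def find_style_violations(text: str, max_len: int = MAX_LINE_LENGTH) -> list[tuple[int, str]]:
    """Return (line_number, message) pairs for purely mechanical style issues."""
    violations = []
    for number, line in enumerate(text.splitlines(), start=1):
        if line != line.rstrip():
            violations.append((number, "trailing whitespace"))
        if "\t" in line:
            violations.append((number, "tab character"))
        if len(line) > max_len:
            violations.append((number, f"line longer than {max_len} characters"))
    return violations


def main(paths: list[str]) -> int:
    """Check each file and return a non-zero exit code if anything fails."""
    failed = False
    for path in paths:
        text = Path(path).read_text(encoding="utf-8")
        for number, message in find_style_violations(text):
            print(f"{path}:{number}: {message}")
            failed = True
    return 1 if failed else 0
```

To use it as a hook, call `sys.exit(main(sys.argv[1:]))` from a small entry script; a non-zero exit code is what blocks the commit or fails the CI job, so nobody has to argue about whitespace in a review comment.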

Nail these basics and reviews stop feeling like trial by fire.

Standardizing everything

With foundations set, move to checklists and tooling.

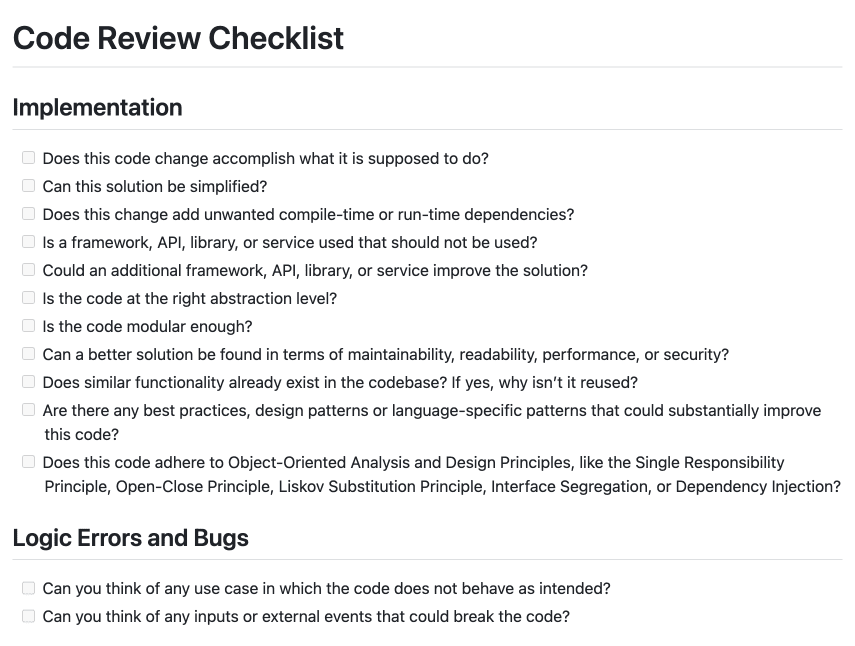

Start with a clear checklist. The GitHub repo by Michaela Greiler is a good template. You’ll cover behaviour, readability, security, and “are we reinventing the wheel?” questions.

And yes, you need the checklist. Airline pilots use them. Surgeons use them. I’ll happily imitate professionals who literally hold lives in their hands.

Next up: linters, static analysis, vulnerability scanners. I run a couple of Python pre-commit hooks, type-checking in CI, and auto-formatting inside my IDE. If I miss something, at least three automated voices tell me so (sometimes simultaneously, which is humbling).

Common things to look out for

Here’s the mental checklist I use while reviewing. Cherry-pick as needed.

PR size. Anything that takes more than an hour to review should probably be split. Brain fatigue is real.
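One way to make the size rule mechanical is to count changed lines before asking for a review. The sketch below parses the output of `git diff --numstat`; the 400-line threshold is a hypothetical stand-in for "about an hour of review" and should be tuned per team.

```python
MAX_CHANGED_LINES = 400  # hypothetical threshold; tune to what your team reviews in about an hour


def count_changed_lines(numstat_output: str) -> int:
    """Sum added and deleted lines from `git diff --numstat` output."""
    total = 0
    for row in numstat_output.splitlines():
        if not row.strip():
            continue
        # Each row is "<added>\t<deleted>\t<path>".
        added, deleted, _path = row.split("\t", 2)
        if added == "-" or deleted == "-":
            continue  # binary files report "-" instead of line counts
        total += int(added) + int(deleted)
    return total


def should_split(numstat_output: str, limit: int = MAX_CHANGED_LINES) -> bool:
    """Return True when the diff is larger than the agreed limit."""
    return count_changed_lines(numstat_output) > limit
```

Line count is a crude proxy for review effort (a 20-line concurrency change can take longer than a 500-line rename), so treat the result as a prompt to think about splitting, not a hard gate.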

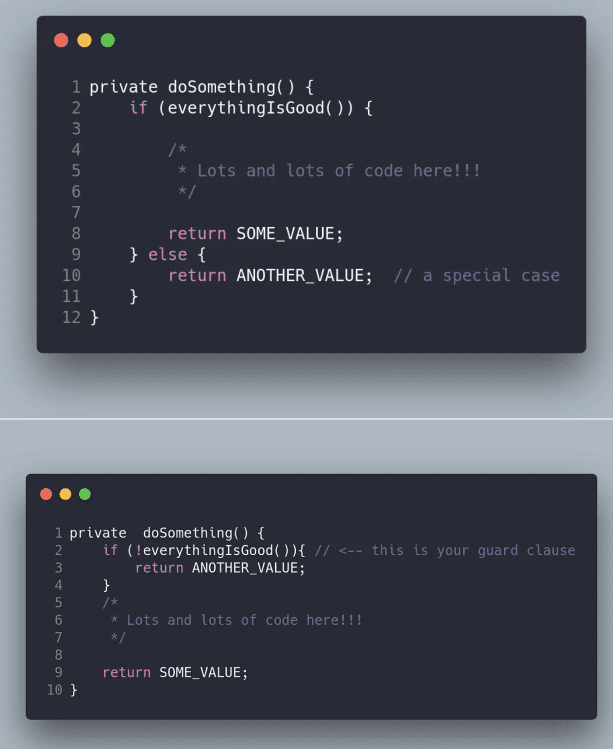

Guard clauses. I prefer early exits over Russian-doll nesting. Some swear by a single return — that’s fine, agree on one approach and document it.
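To make the comparison concrete, here is the same hypothetical function written both ways. The order structure and the shipping prices are invented for illustration.

```python
def shipping_cost_nested(order):
    """Russian-doll version: the happy path is buried three levels deep."""
    if order is not None:
        if order["items"]:
            if order["country"] == "NL":
                return 5.0
            else:
                return 15.0
        else:
            raise ValueError("order has no items")
    else:
        raise ValueError("order is missing")


def shipping_cost_guarded(order):
    """Guard-clause version: reject bad input first, then handle the real work."""
    if order is None:
        raise ValueError("order is missing")
    if not order["items"]:
        raise ValueError("order has no items")
    if order["country"] == "NL":
        return 5.0
    return 15.0
```

Both versions behave identically; the second simply keeps each error next to the condition that triggers it.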

Dead code. Delete it. Version control remembers.

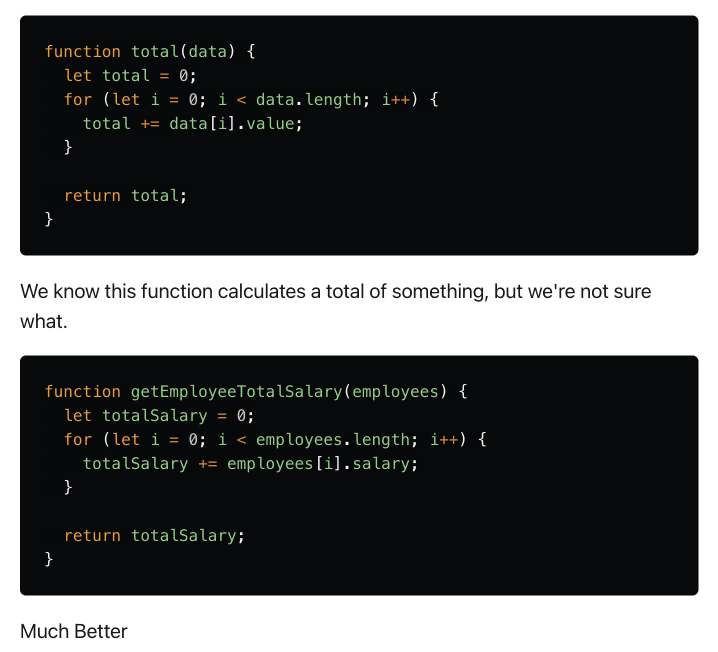

Naming. “data” vs. “sumEmployees” — self-explanatory, right? Make the reader’s job easy.

Over-engineering. Were you asked for a toaster and delivered a toaster factory? Ask why. Sometimes the answer is “future roadmap,” sometimes it’s “I got carried away after coffee.”

Wheel-reinvention. Big codebases hide utilities nobody remembers. A quick search avoids two modules doing 90% the same thing.

Unplanned refactors. If a PR both adds a feature and rewrites half the persistence layer, I’ll request a split. Saves merge-conflict heartache later.

Comments. Code should say what; comments explain why. Future-me appreciates the context.
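A small illustration of the difference, using a hypothetical retry helper. The upstream behaviour described in the comment is invented; the point is only where the comment spends its words.

```python
import time

RETRY_DELAY_SECONDS = 2


def fetch_with_retry(fetch, attempts=3, sleep=time.sleep):
    """Call fetch(), retrying on ConnectionError up to `attempts` times."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")

    # A "what" comment would say: "loop three times and call fetch".
    # The code already says that. The "why" is the useful part:
    # the (hypothetical) upstream API drops connections for a moment during
    # its deploys, so a short pause usually succeeds without paging anyone.
    last_error = None
    for attempt in range(attempts):
        try:
            return fetch()
        except ConnectionError as error:
            last_error = error
            if attempt < attempts - 1:
                sleep(RETRY_DELAY_SECONDS)
    raise last_error
```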

New dependencies. Licences, security, long-term maintenance — tread carefully. I usually ask a few questions before approving.

Endpoints. Data filtering, auth, idempotency. The usual suspects. A missed header check today becomes a CVE write-up tomorrow.
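As a rough sketch of what a reviewer is looking for, here is a framework-free stand-in for a payment endpoint. Every name in it (`PaymentService`, the token format, the in-memory store) is hypothetical; a real service would use its web framework's auth layer and a persistent store for idempotency keys.

```python
from dataclasses import dataclass, field


@dataclass
class PaymentService:
    """In-memory stand-in for a real endpoint; all names are hypothetical."""
    valid_tokens: set
    processed: dict = field(default_factory=dict)  # idempotency key -> stored response

    def create_payment(self, headers: dict, body: dict) -> tuple[int, dict]:
        # Auth: reject the request before touching any data.
        if headers.get("Authorization") not in self.valid_tokens:
            return 401, {"error": "unauthorized"}

        # Idempotency: a retried request must not create a second payment.
        key = headers.get("Idempotency-Key")
        if not key:
            return 400, {"error": "missing Idempotency-Key header"}
        if key in self.processed:
            return 200, self.processed[key]

        # Input check: only accept a positive integer amount.
        amount = body.get("amount")
        if not isinstance(amount, int) or amount <= 0:
            return 400, {"error": "amount must be a positive integer"}

        # Data filtering: build the response from an explicit list of fields
        # instead of echoing the request body back to the client.
        response = {"payment_id": len(self.processed) + 1, "amount": amount}
        self.processed[key] = response
        return 201, response
```

Sending the same request twice with the same `Idempotency-Key` returns the stored response the second time instead of creating another payment, which is the behaviour to check for in review.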

Plenty more could be added. Experience will tune the list. Checklists help until muscle memory forms — then keep the checklist anyway as back-up.

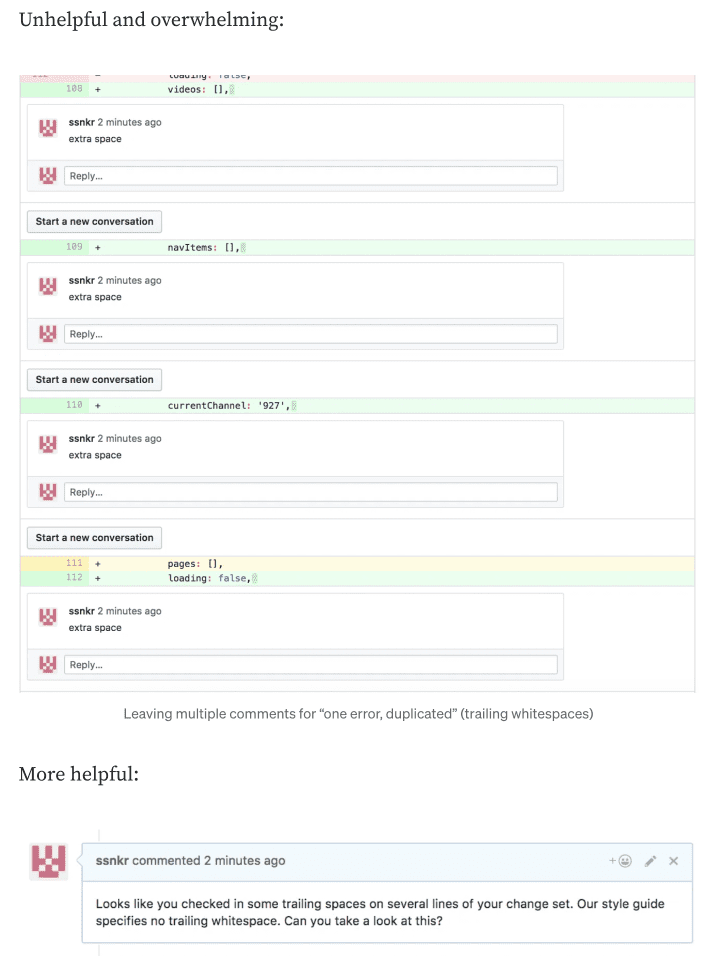

Great, you found issues. Now, how do you communicate them?

Avoid turning feedback into demotivation. I’ve seen brilliant engineers sulk for a week after a harsh review. That’s pure waste.

I lean on the principles of Non-Violent Code Review:

- Everyone’s code can improve.

- Critique the code, not the coder.

- Keep emotions out of the pull request.

- Phrase comments as invitations, not commands.

When something irks you, double-check whether it’s style preference or an actual flaw. If it’s the former, ask instead of dictating. Pragmatism beats theoretical purity.

❌ Bad: “You are writing code that I can’t understand.”

❌ Bad: “Your code is so bad/unclear/…”

✅ Good: “I’m having a hard time understanding the code.”

✅ Good: “I have a feeling the code lacks clarity in this module.”

✅ Good: “I’m struggling to understand the complexity, how can we make it more clear?”

Small wording tweaks lower the emotional temperature and open dialogue.

Remember: nobody boots their IDE thinking, “Let’s write unmaintainable nonsense today.” Assume positive intent and say thanks when things look great.

Code Reviews FAQ

Rapid-fire answers to questions I hear most often:

- What if reviews slow us down?

If you skip them today, you’ll make up the “lost” time debugging next quarter. If your queue is still clogged, shorten checklists or automate more style checks.

- Do we review every line?

No. Docs typos and README tweaks can go straight to main. Major logic paths, security-sensitive code, or anything customer-facing gets a full review.

- Can automation replace humans?

Linters spot syntax; humans spot intent. You need both.

- How about distributed teams?

Asynchronous reviews work fine. Leave comments, move on, circle back. Just agree on SLAs so PRs don’t rot.

- Maximum PR size?

Whatever a reviewer can digest in under an hour. If it takes longer, split.

- Urgent hotfixes?

Fix first, review right after deploy. No skipping that second step.
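The "Do we review every line?" answer above can be turned into a simple routing rule. This is a sketch under stated assumptions: the file suffixes, the directory names, and the middle "standard" tier are all hypothetical and would need to match your own repository layout.

```python
from pathlib import PurePosixPath

DOC_SUFFIXES = {".md", ".rst", ".txt"}        # assumed documentation file types
SENSITIVE_DIRS = {"auth", "payments", "api"}  # hypothetical directory names


def review_level(changed_paths: list[str]) -> str:
    """Return "none", "standard", or "full" for a set of changed files."""
    if not changed_paths:
        return "none"
    paths = [PurePosixPath(p) for p in changed_paths]
    # Docs-only changes can go straight to main.
    if all(p.suffix.lower() in DOC_SUFFIXES for p in paths):
        return "none"
    # Anything touching a sensitive directory gets the full treatment.
    if any(SENSITIVE_DIRS.intersection(p.parts) for p in paths):
        return "full"
    return "standard"
```

For example, `review_level(["README.md", "app/auth/tokens.py"])` returns `"full"`, because one sensitive file is enough to escalate the whole PR.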

Thoughts, war stories, disagreements? Comment section’s open.


16 Comments

Bad code is whatever your lead dev perceives it to be…

So this sounds a bit sad. Sure, the lead dev’s opinion carries weight, but it’s not the be-all and end-all.

Now, don’t get me wrong. Your lead dev’s feedback is crucial. They’ve been doing this for longer and have probably seen a lot of spaghetti code, so take the feedback seriously.

And hey, sometimes you gotta stand your ground. If you think your code adheres to best practices and you’ve got solid reasoning behind it, make your case. Just be ready to back it up with more than just “it works on my machine.”

You might be interested in my blog post about code reviews, as it has other very useful information, backed by research: https://blog.pplupo.com/2021-12-14-Code-Review-Guidelines-Beyond-the-what/

Thanks for sharing that. It cites a lot of scientific papers; I will take a more in-depth look at them.

Well said. Constructive critique of code can be very valuable but too often devolves into turf wars and ideology.

A. N

A good overview of why code reviews are important!

I feel a bit bad that other commenters here (Alex, first Anonymous) have experienced bad teams often enough to hold the opinions they do (“too often” and “whatever your lead dev”…).

I had the fortune to start my career on a project requiring 2 code reviews, but everyone was approachable and kind, and I kept learning as if on a speed run.

> Remember, you’re reviewing code, not personality traits. Don’t label someone as sloppy/bad/tardy just because they missed a couple of tests. Point out the code, not the person. “I think focusing more on test coverage at this function would be beneficial. What do you think?” It goes down a lot smoother than “You always miss some test cases!”

As a code reviewer, how do you suggest handling multiple code reviews where the same sloppy code keeps showing up? I am running into this currently, and it gets exhausting repeating the same reasons why they should make changes… and now they (the coder) just think I’m being a repetitive a**hole because I’m saying the same things over and over. It’s emotionally exhausting cuz I gotta go through this every PR. Genuinely looking for help, thanks.

PS: Loved the article, btw

-TKeezy

I worked in a Scrum team whose product was late to market (released a full year behind their competitor!) and the name of the game was fast, cheap, and poor quality. I did not enjoy it, and had to stem the tide of rookie devs using questionable data structures. My favorite response was “Hey, are you sure you want to skimp on testing for this? This will cause problems X, Y, and Z later.” The response was always some form of “But it works and it’s fast”, to which I said “OK, but be warned, the rest of the team will make sure all future X, Y, and Z work on this item goes to you”. Passing the work by saying “Do you want this?” and making sure they answer the question, “But you didn’t answer my question… do you want this extra responsibility?”, seemed to cut down on ~30% of repeat issues, and I was able to convince at least 1 of 4 new devs on how it should be done.

Code review is a very laborious, fickle, opinionated process. Once someone reviews my PR, I just make their changes. I care more about getting the PR through, so I become their yes-man.

Going back and forth bickering about what style of code is “cleaner” will just waste my time, when at work I really only care about optimizing for stuff like performance reviews.

Wow! This article made me think about the real purpose behind code review. Thanks!

Great article. Working in software development, we already know most of what the article covers, but we certainly don’t always follow it, so it’s always good to have things well explained and refreshed like this. On the other hand, I believe some kind of guide should be created for the “agile world” (Scrum, Kanban, and so on). I believe time-to-market pressure also gets bad code deployed, since there is so much pressure to approve almost anything just to accomplish some high-level goals for the teams. I’ve seen a big difference between in-house product projects and working as an external vendor. The first is in a rush to present new features no matter the risk (“we can do QA later, let’s go to production first”). The second might be more detailed, but since not all code review behaviours are followed, you can easily be overwhelmed about your code: there are a lot of product owners and code owners out there who just want the code to be the way they want it.

Code reviews are super important, man. They catch mistakes but really, they make the team work better together. It’s all about getting better together, not just pointing out what’s wrong. Gotta keep an open mind and not get all defensive. Tools help, but nothing beats a real person looking at the code. We’re all here to make the code better, not to blame. You think adding more automated tools in the review process is a good idea?

Once, in a sprint to meet an insane deadline, my team decided to skip code reviews, thinking we’d save precious time. What followed was a chaotic scenario where merging to the main branch felt like defusing a bomb, unsure if our new features would implode the app. After facing the aftermath, I’ve become a huge advocate for regular, thorough reviews, regardless of time constraints.

Fantastic. I work for an enterprise tech company trying to reduce time-to-merge. Here are some tips. Checklists are great, but when the author does not complete them, the PR takes longer to merge. This is because good descriptions are really important. Checklists can backfire. I